The Jagged Profile of AI in Therapy

"Will AI replace therapists?" is a red herring.

The question pulls attention toward a job title and away from the uneven profile of abilities underneath it. Isolated examples get overread easily. One strong response becomes evidence that therapists are doomed. One weak response becomes evidence that AI is still stupid. Dell'Acqua, Mollick, and colleagues described AI's capabilities as a "jagged technological frontier," where some tasks are easy for AI while nearby tasks still fall outside its reach.

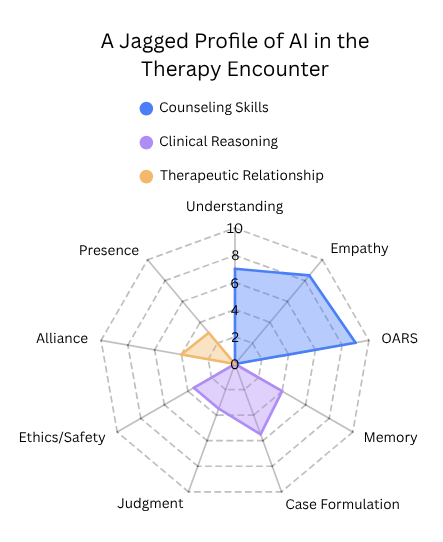

The chart here is a rough sketch of therapist-relevant capacities within the therapy encounter. The three buckets, subskills, and numbers are my shorthand for making the uneven profile visible.

An illustrative sketch of AI across therapist-relevant capacities within the therapy encounter.

Jagged Intelligence

By jagged intelligence, I mean that AI does not advance across therapist-relevant abilities in one smooth, unified way. It develops an uneven profile. The chart uses three rough buckets: counseling skills, clinical reasoning, and therapeutic relationship. The short labels on the figure stand in for fuller dimensions, and the scores are benchmark-informed estimates rather than formal measurements.

Counseling skills includes listening and accurate understanding, empathic response language, and counseling microskills such as OARS. AI is likely to look strong here early. Large language models are built to predict and generate plausible language from enormous amounts of human text. That makes them unusually good at paraphrasing, matching tone, producing emotionally legible wording, and generating many possible responses very quickly. The benchmark anchors are also clearest here. HEART compares humans and LLMs in multi-turn emotional-support dialogue, CounselBench tests single-turn mental-health counseling responses, and a 2026 motivational interviewing benchmark found fair-to-good model performance on MITI-based measures. CounselBench also found that large language models often outperformed online human therapists on perceived response quality in single-turn counseling, even while expert raters still flagged recurring safety problems.

Clinical reasoning includes memory across time; case formulation; intervention judgment and timing; and ethical judgment, risk handling, and accountability. This bucket is a stranger mix. Some parts line up poorly with current language models at a basic architectural level. Memory is the clearest example. These systems do not carry forward human-like context over time. They work within context windows, and once important details fall outside that window, they can be lost, flattened, or inconsistently reintroduced. Long-context and memory benchmarks show progress, but work such as BEAM still finds that long conversations create real problems. Judgment is hard for related reasons. Good clinical judgment includes choosing among competing interpretations, tolerating uncertainty, weighing risk, knowing when not to intervene, and having a feel for what fits this person in this moment. Suicide-risk rating studies give one partial anchor for risk handling, but risk detection is not the same as ethical responsibility. Therapy-like research already hints at a similar split, where structured LLM counseling can look organized and method-adherent while still coming across as weaker on empathy, collaboration, and cultural understanding.
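The context-window point can be made concrete with a small sketch. This is an illustration under loud assumptions, not how any particular product works: `fit_to_window` is a hypothetical helper, the budget is counted in words rather than real tokens, and real systems often add summaries or retrieval on top of plain truncation.

```python
from collections import deque


def fit_to_window(turns, max_words):
    """Keep the most recent turns whose combined word count fits the budget.

    Hypothetical helper: word count stands in for a real token count.
    """
    kept = deque()
    used = 0
    # Walk backward from the newest turn and stop once the budget is exceeded.
    for turn in reversed(turns):
        cost = len(turn.split())
        if used + cost > max_words:
            break
        kept.appendleft(turn)
        used += cost
    return list(kept)


session = [
    "Client: my brother died in March",        # early, clinically important detail
    "Client: work has been stressful lately",
    "Client: I slept badly again this week",
    "Client: spring is always hard for me",
]

# With a small budget, only the newest turns stay visible to the model.
visible = fit_to_window(session, max_words=14)
print(visible)
```

In this toy session the statement about spring survives while the loss that explains it has dropped out of view, which is the kind of flattening described above.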

Therapeutic relationship includes alliance, rupture repair, and presence. Presence is my shorthand for felt human presence and embodied connection, including being experienced as a real other person with timing, emotional weight, nonverbal responsiveness, and shared responsibility. Alliance and presence involve being with another person over time, handling rupture and repair, and carrying responsibility. Some relational micro-behaviors can be imitated very well, but the fuller relationship bucket is less obviously aligned with what current LLM systems are built to do. Sycophancy can also look like alliance from a distance. A system that mirrors the client too readily, validates too broadly, or avoids useful friction may feel supportive while failing to do the relational work of therapy. Teletherapy research gives useful context for alliance and presence, but alliance in teletherapy remains a human clinical relationship, not an AI benchmark.

Some therapist-relevant capacities fit current AI architectures much better than others. The likely picture is lopsided gains, not one clean march toward "AI therapist" as a single achievement.

Smarter Does Not Mean Smoother

Even if AI keeps getting much more capable overall, the profile may stay uneven for a long time. The jagged frontier literature describes exactly that kind of unevenness. Better performance on some tasks does not guarantee balanced performance on nearby tasks in the same workflow. Therapy gives this problem unusually high stakes because people are tempted to infer a whole mind from a few strong verbal behaviors.

Both common reactions are too simple. Strong performance in one slice does not settle therapist competence. Weak performance in one slice does not settle irrelevance either. The profile can stay jagged while the overall capability curve rises fast.

What May Exceed Humans First

AI may exceed human performance first in selected capacities rather than whole domains. In counseling-related work, that may look like unusually strong execution in selected micro-skills. Polished empathic phrasing, fast reflections, OARS-style responses on demand, concise summaries, consistent tone, and language adapted quickly across audiences and reading levels all look plausible here.

The same pattern shows up outside psychotherapy-specific benchmarks. Ayers and colleagues found that chatbot responses to patient questions were rated higher than physician responses on both quality and empathy. Taken with the counseling benchmarks above, that does not show that AI has surpassed humans in therapy as a whole. It supports the narrower claim that selected communication behaviors can look very strong very early.

Clinical reasoning may also exceed human performance in selected ways before anyone should claim human-level therapist judgment. Speed is the obvious example. So is holding multiple candidate formulations in play, scanning a long note history when retrieval is added, or surfacing contradictions a tired clinician might miss. Those are real advantages if they become reliable. They still do not add up to the whole role.

Scaffolding Changes Capability

People often talk about AI as if they are judging the raw model. In many real systems, they are not. A user sends one prompt and sees one polished reply. Behind that reply there may be hidden instructions, retrieval, summaries, memory layers, routing, formatting constraints, and multiple prompt steps. In therapy-style systems, that behind-the-scenes structure can be doing as much work as the visible model response.

Scaffolding can make therapist-like systems more capable than a plain chatbot. Memory, retrieval, summaries, routing, prompts, and treatment-specific structure change what the system can actually do. Research comparing human peer counselors with an LLM-based CBT system showed a split profile. The LLM setup showed stronger CBT adherence and stayed closer to the treatment structure. The human peer sessions showed more empathy, collaboration, small talk, therapeutic alliance, and shared experience. System design changed what the system could do.
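To show how much can sit between the user's message and the model, here is a minimal sketch of a scaffold that assembles a prompt from hidden instructions, a running summary, and retrieved notes. Everything in it is an assumption for illustration: the `Scaffold` class, the bracketed labels, and the keyword-overlap retrieval are hypothetical and do not describe the system in the study mentioned above. The model call itself is left out.

```python
from dataclasses import dataclass, field


@dataclass
class Scaffold:
    """Hypothetical scaffold: hidden instructions, a summary, and stored notes."""
    system_instructions: str
    session_summary: str = ""
    notes: list = field(default_factory=list)

    def retrieve(self, message, k=2):
        """Return up to k notes ranked by naive keyword overlap with the message."""
        words = set(message.lower().split())
        scored = [(len(words & set(n.lower().split())), n) for n in self.notes]
        scored = [(s, n) for s, n in scored if s > 0]  # drop notes with no overlap
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [n for _, n in scored[:k]]

    def build_prompt(self, message):
        """Assemble the text the model would see; the user only typed `message`."""
        parts = [f"[instructions] {self.system_instructions}"]
        if self.session_summary:
            parts.append(f"[summary] {self.session_summary}")
        for note in self.retrieve(message):
            parts.append(f"[retrieved] {note}")
        parts.append(f"[user] {message}")
        return "\n".join(parts)


scaffold = Scaffold(
    system_instructions="Follow the CBT session agenda.",
    session_summary="Client is practicing thought records.",
    notes=[
        "homework: thought record about work conflict",
        "client prefers morning sessions",
    ],
)

prompt = scaffold.build_prompt("I had another conflict at work")
print(prompt)
```

The user typed one sentence, but the assembled prompt carries the treatment structure, the prior summary, and a relevant homework note. Judging the visible reply as if it came from a raw model would miss all of that.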

The Long Tail of Human Presence

Presence deserves its own category because it may have a very long tail. Even if AI keeps improving across communication and reasoning-related capacities, being with another person may remain slower-moving, or remain different in kind. A client may find an artificial system useful, structured, and even emotionally resonant, while still not experiencing that as equivalent to another human being who can witness, respond, and share responsibility.

Many clients will likely continue to prefer a human therapist even if AI becomes very strong in selected therapy-relevant functions. That preference is part of the encounter. It is bound up with alliance, trust, attachment, shame, safety, and what the person is actually seeking in treatment. The session-level counseling study points in that direction, since the human sessions were stronger on alliance-related features even when the LLM system looked more method-adherent in other ways.

Advanced models or scaffolded systems may still eventually exceed humans across many therapist-relevant domains. That possibility does not erase a long transition period in which competence is uneven and human presence may keep a very long tail.

Still Not the Whole Job

The discussion so far has mostly been about the therapy encounter itself. That is only part of the therapist role. Therapy includes language, memory, formulation, judgment, alliance, ethics, repair, and presence. It also includes documentation, continuity across time, treatment planning, communication with parents or family members when relevant, coordination with schools, physicians, or insurers, and adapting care to the person's actual life circumstances. Accessibility, transportation, money, work schedules, childcare, disability, culture, and language all affect what good care requires. The replacement question keeps missing the work wrapped around the encounter. A system can look strong inside a bounded therapeutic exchange and still be far from the job.

If AI makes notes, summaries, screening review, between-session follow-up, parent updates, or school coordination easier, the therapist role may not simply shrink. In a field with unmet demand, those gains could turn into more follow-up, more coordination, more timely documentation, and more care for people who currently wait or drop through gaps. Some support may also happen outside traditional therapist hours or between sessions, where even limited help can be meaningful for people who have no timely access to care.

As selected tasks get automated, the therapist role may not shrink so much as concentrate around the parts with the longest human tail. More of the remaining work may center on judgment, responsibility, presence, rupture and repair, family communication, and adapting care to the person's actual life. In a field with high demand, that could mean more care gets delivered and therapists become busier in different ways rather than simply being replaced.

Closing

"Will AI replace therapists?" is a red herring because it collapses a complicated transition into a yes-or-no verdict and collapses time along with it. If therapist-relevant competence keeps rising while staying jagged, and if human presence has a very long tail, then full therapist replacement is probably the wrong near-term focus. AI may assist and automate parts of the therapist role, expand access for people who currently go without care, and make therapists busier than ever.

The views expressed here are my own and do not necessarily reflect the views of any current or future employer, training site, academic institution, or affiliated organization.